Entropy is the general trend of the universe toward death and disorder.

— James R. Newman

The concept of entropy, the inevitable descent of any system into chaos, is highly relevant to modern businesses. Left unchecked, the growth of headcount, the introduction of new technologies and systems, and the dynamics of supporting customers in an increasingly interconnected world—among other factors—push even the greatest organizations towards a state of disorder.

Despite our best efforts, data-driven organizations are unfortunately some of the most likely to fall prey to entropy. The rise of the modern data team, together with the mandate to make data central to every facet of the business and every decision, means that data and analytics assets are more available than ever before.

At these companies, data is consumed across every function, with every employee, from executives to middle management to frontline staff, challenged to back up decisions with analysis. Data is analyzed, reported on, and consumed across sometimes dozens of systems, resulting in not just hundreds or thousands, but often tens of thousands of dashboards, reports, and one-off pieces of analysis.

With this many data assets, organizations run the risk of undermining the very process of data-driven decision-making they are trying to support. End users spend more time trying to figure out what data to use and how to make sense of this environment, and less time actually using the data to drive results. Meanwhile, data teams are left with an increasingly out-of-control sprawl of assets to manage.

Cause #1: exponential growth in the number and types of analytics tools

Becoming more data-driven is one of the key trends in how organizations operate and organize themselves. A 2021 survey found that 83% of CEOs wanted their companies to be more data-driven. But as being data-driven has shifted from an aspirational goal to a core operating philosophy for many organizations, the tools teams use to create and consume data have exploded in number and scope.

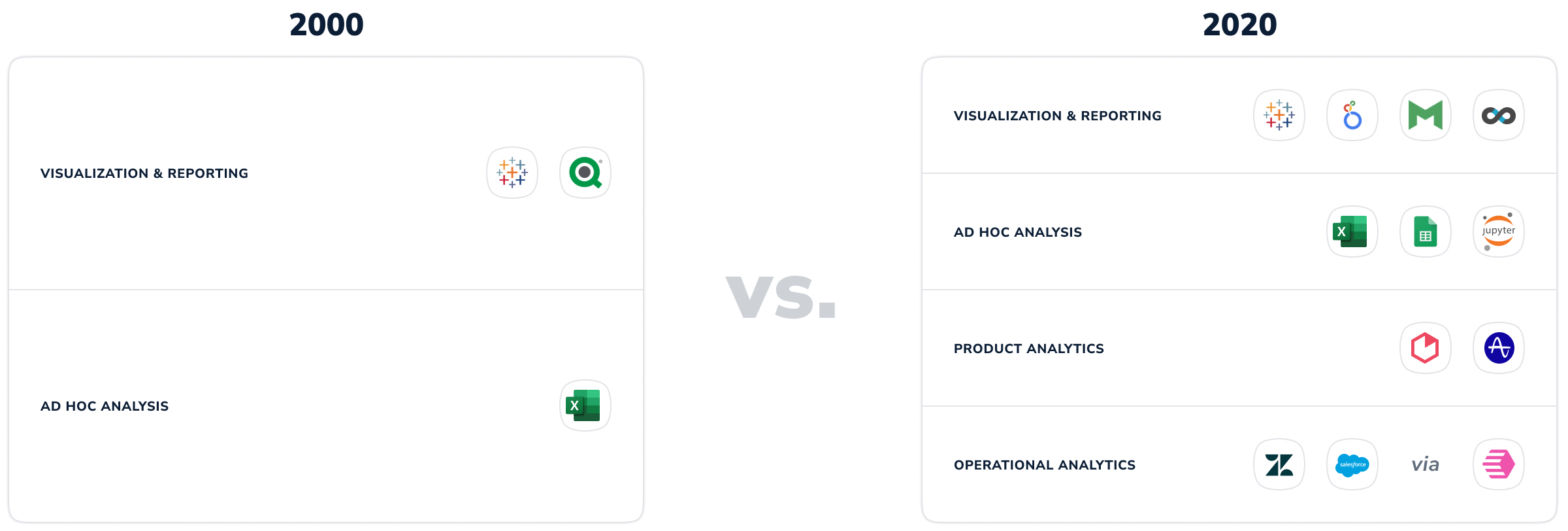

Consider the below, which charts some of the core analytics tools and categories used in 2000 versus 2020:

While illustrative, this chart undersells the Cambrian explosion of analytics tools available to companies, as well as the number of categories targeting different analytical jobs to be done. This exponential growth shows that organizations are increasingly adopting multiple tools, each typically built with its own job to be done and its own target audience in mind.

The result, as you may have guessed, is entropy.

Cause #2: analytics is now core to every part of the business

But the sheer number of tools is not the only factor to blame. The analytics teams charged with maintaining company data are increasingly ubiquitous, and serve as the decision-making nerve center of their organizations. Analytical competency is no longer the domain of a centralized team; increasingly, key analytics support is embedded within every function (think Revenue, Marketing, or Business Operations teams). Data is being used by more and more end users, with ever greater pressure heaped on the teams that supply them with that data.

As reliance on these teams mounts, so do the stakes of data entropy. Stakeholders from every part of the business need to know whether the data they are using is accurate, complete, and timely. A centralized data team might have a good sense of what assets are available to address a given problem, but most employees within an organization will not know where to find the necessary data or, even worse, might not know that it exists.

Cause #3: knowledge asymmetries undermine data effectiveness

This leads us to the final root cause of data asset entropy. When tools are numerous and specialized, there will inevitably be large knowledge asymmetries between data builders and data consumers.

These asymmetries cut both ways. Data teams have more advanced analytical skill sets and context around the data that is not available to other parts of the business. In contrast, operational teams have function-specific skills and understanding of how their teams operate, as well as a greater knowledge about how data assets are used in their functions day to day. Such an environment is deadly to effective knowledge sharing and signaling, with the end result that data is no longer an effective guidepost to the organization, but is instead a roadblock to efficiency.

"Modern BI tools have made it so easy to create and share analytical assets, dashboards and charts…and really those things are pieces of software. And they say with any software 80% of the cost of building software is actually in maintaining it. No one is actually maintaining these analytical assets full-time.

"They're not treating them like true products. There is a lot of talk of data as products. There is hardly any maintenance, there's no observability downstream from actually creating that asset."

— Ted Conbeer, Chief Data Officer at Privacy Dynamics

With the growing sprawl of assets from sometimes dozens of different systems, organizations’ data environments begin to look increasingly fractured. This impacts both data consumers and data builders, but does so in different ways.

For data consumers, a data environment with a high degree of entropy reduces the value and efficiency of their interactions with data. They will likely experience a lot of uncertainty about what tools or reports to use, or choose to go it alone with their own reporting. This not only slows down their ability to incorporate data into their decision-making, but may in fact result in using the wrong information and therefore coming to the wrong conclusions. Just as with data models, data consumers and their decisions aren’t exempt from the old adage: garbage in, garbage out.

There is a more human element to this equation as well. As access to the correct information becomes more of an issue, trust erodes between the teams who put the data together and the teams who consume it. Distrust compounds over time, meaning that even if your analytics team is putting together exactly the content needed to support the business, the business may not take full advantage of it.

"Asking what is my canonical sales pipeline and I get 15 different answers from 15 different dashboards, potentially with materially different results driving different decision making. It's a key problem. It's a technology problem and a process and people problem."

— Jamie Davidson, Co-founder, Omni

On the data builders' side of the organization, the costs are no less damaging. When entropy takes hold, analytics teams get stuck filling in the gaps. A barrage of questions about what to use, where to find things, and how to interpret them is not remotely conducive to the deep work analytics professionals need to build data products and execute long-term initiatives. Entropy is a vicious cycle, miring analytics teams in a perpetual state of maintenance and support rather than building and innovation.

At the end of the day, of course, the entity that suffers most from the inability to rapidly incorporate data into decision-making is the organization at large.

Though it has been a largely intractable problem to this point, there is hope when it comes to managing data asset entropy. Building on the strategies teams have employed in the past, a new breed of Data Asset Management solutions provides the tools analytics teams need to slow down entropy. In the next article in this series, we will explore how these platforms work and which core features are most important to implement.
